![](/fileadmin/_processed_/2/7/csm__c__AdobeStock_ipopba_323829966_9d266996f8.jpeg)

Transparency and acceptance of self-x systems

The acceptance of autonomous, digitalised systems also depends on their ability to explain decisions in a transparent and comprehensible way. Furthermore, they should use only the data and information necessary for their decisions and protect the privacy of their users. Proof of desirable system properties is another important building block for establishing trust in self-x systems.

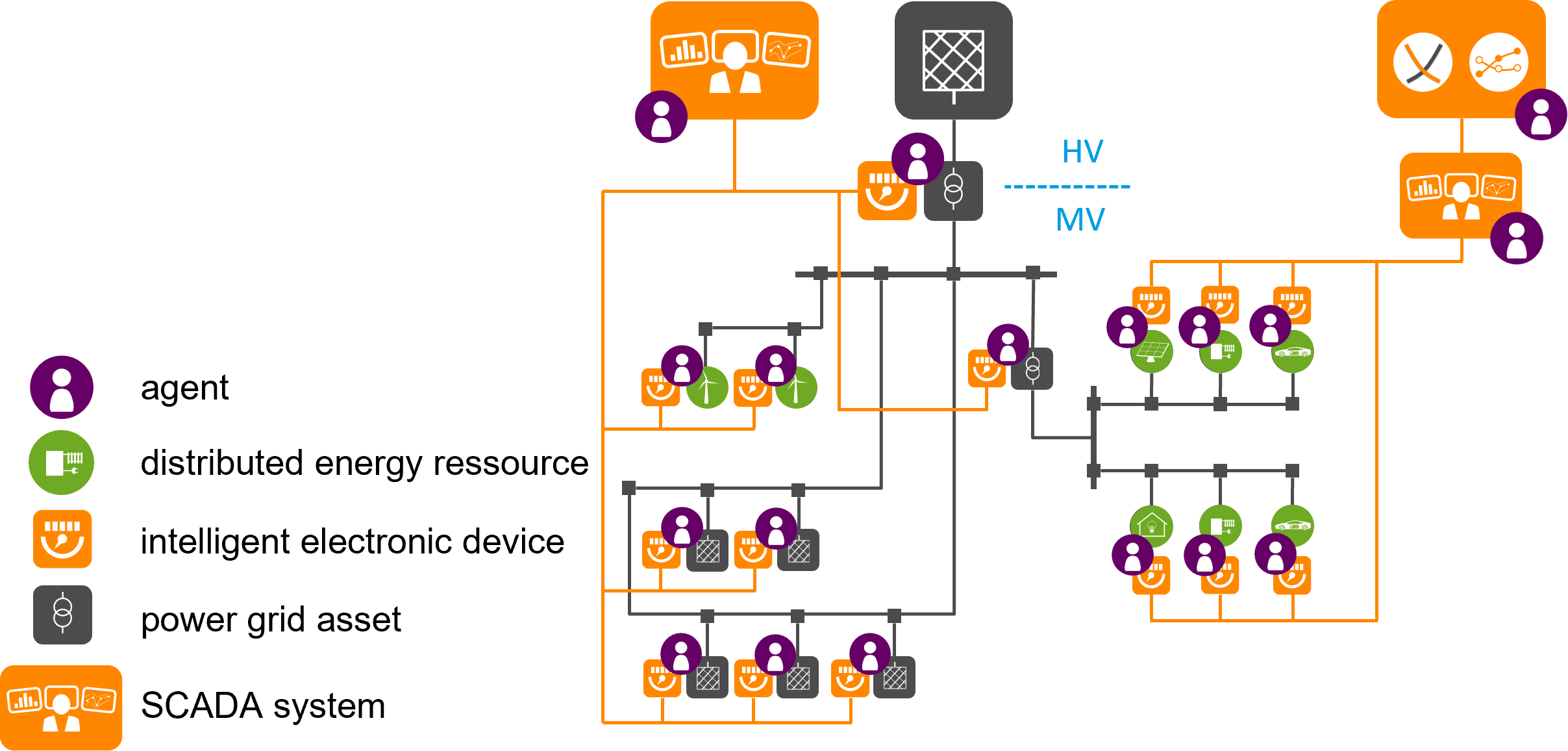

In self-x systems, intelligent agents make autonomous decisions that not only change the state of the cyber-physical system but can also have an impact on the human users. In our work on digitalised energy systems, a single agent usually monitors and controls an individual decentralised energy system - such as a battery storage unit, a heat pump or the flexible loads of a household - or a single controllable element of the energy system - such as a controllable local network station or a circuit breaker in the distribution network. Higher-level goals, such as the maximum use of local feed-in from renewable energy plants or compliance with specified voltage limits in the power grid, are pursued by the agents in a cooperative manner as a self-organising swarm. In doing so, the agents in the swarm negotiate independently among themselves what concrete contribution they can and want to make to the higher-level goal, considering their local goals and user preferences.

For the social acceptance of highly autonomous systems, it is essential, especially in the field of energy supply, that users can trust the system. Trust in technical systems has many complex socio-technical facets. In our work, we focus on three of them: the explainability of decisions, data minimisation in swarm-based optimisation, and the verifiability of desirable system properties, so that the requirements and needs of human users are met in a trustworthy manner. We work closely with the Energy Informatics group and the Digitalised Energy Systems group at the Carl von Ossietzky University in Oldenburg.

Explainable and self-explaining systems

In a critical situation, a power grid agent decides to disconnect a section from the rest of the grid, cutting off power to hundreds of households. A battery storage swarm decides at an inopportune moment to fully charge the storage units, causing short-term economic disadvantages for their owners. In both cases, the question arises whether the decisions and the resulting actions of the agents were justified (e.g., to avoid major damage to the power grid) or whether they were an erroneous decision, or even a malfunction, of the system. Answering this question is not easy, especially in self-x systems, which operate without a higher-level, central control instance.

The highly topical research field of Explainable Artificial Intelligence (XAI) is concerned with explaining the decisions of individual agents that rely on neural networks. Building on this work, to which the Power Systems Intelligence group of the Energy Division at OFFIS also contributes, we are investigating how collective swarm behaviour can be explained. Hundreds of individual decisions may interact in an emergent way: the globally perceivable decision and the globally observable behaviour of a self-x system are composed of the local decisions and the local behaviour of the individual agents and of their interaction. We are still at the very beginning of answering the question of how the behaviour of a self-x system can be explained. Initial conceptual approaches use an external observer in the sense of the observer concept from organic computing. In the long term, however, the self-x system itself should be able to generate transparent and comprehensible explanations for its behaviour and decisions, in keeping with the idea of self-organisation.

Data-saving and privacy-preserving optimisation

Distributed algorithms and coordination procedures in agent systems are often based on consensus building. In order to reach an agreement on joint actions, the agents send each other messages with different options for action. For example, in a smart city district that is connected in terms of energy or in a virtual power plant with various individually operated systems, information about different electricity generation or consumption profiles is exchanged. Often, data on prices or costs are also required. Such information can be aggregated and further processed with suitable artificial intelligence methods to derive at least approximate data about internal structures and (business) processes.
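The message exchange described above can be illustrated with a textbook averaging consensus. This is a minimal sketch, not the coordination procedure used in our projects: the function name, the ring topology, the step size and the set-point values are all assumptions chosen for illustration. Note that every agent sends its current value to its neighbours in plain form, which is exactly the kind of exposure that makes inference about internal processes possible.

```python
def average_consensus(values, neighbours, rounds=50, step=0.3):
    """Run synchronous averaging consensus and return each agent's final estimate.

    values      -- initial local value of every agent
    neighbours  -- neighbours[i] lists the agents that agent i exchanges messages with
    step        -- weight of the correction; must stay below 1 / (maximum number of neighbours)
    """
    estimates = list(values)
    for _ in range(rounds):
        # Every agent moves its estimate towards the values it received from its neighbours.
        estimates = [
            own + step * sum(estimates[j] - own for j in neighbours[i])
            for i, own in enumerate(estimates)
        ]
    return estimates


# Hypothetical example: four agents on a ring, each proposing a power set-point in kW.
proposals = [2.0, 6.0, 4.0, 8.0]
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
agreed = average_consensus(proposals, ring)
```

With symmetric links, all estimates approach the mean of the initial proposals, so the agents agree on a joint value without a central coordinator.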

We have already been able to demonstrate this data protection problem: operating and working hours can be inferred by evaluating heating profiles, machine utilisation and time-variable tariffs negotiated individually with suppliers. In a similar way, occupancy times in private homes can be determined. Distributed agent systems will only gain acceptance in the long run if private data, and sensitive business data in particular, either are not needed for the coordination task or at least do not have to be disclosed. To this end, we are developing privacy-preserving approaches to encrypted optimisation. Either agents reveal only data that other agents cannot decrypt, and computations are performed directly on the encrypted data, or the methods follow the secret-sharing principle, in which no single agent possesses complete information and computations can only be carried out jointly in a distributed manner. The agent protocols used must be adapted accordingly. The greatest challenge is to maintain sufficient performance in solving the coordination task.
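The secret-sharing principle can be sketched with additive secret sharing, one common textbook scheme. The example below is an illustration under stated assumptions, not the protocol developed in our work: the modulus, the function names and the feed-in values are hypothetical, and all agents are simulated in a single process instead of exchanging real messages.

```python
import secrets

PRIME = 2**61 - 1  # modulus for share arithmetic (assumed for this sketch)


def make_shares(value: int, n_agents: int) -> list[int]:
    """Split `value` into n additive shares that sum to it modulo PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_agents - 1)]
    # The last share is chosen so that all shares add up to the secret value.
    shares.append((value - sum(shares)) % PRIME)
    return shares


def secure_sum(private_values: list[int]) -> int:
    """Compute the sum of all private values from shares only."""
    n = len(private_values)
    # Row i holds the shares agent i sends out; column j is what agent j receives.
    distributed = [make_shares(v, n) for v in private_values]
    # Each agent j adds up the shares it received and publishes only this partial sum.
    partial_sums = [sum(distributed[i][j] for i in range(n)) % PRIME for j in range(n)]
    return sum(partial_sums) % PRIME


# Hypothetical planned feed-in of three agents in watts.
planned_feed_in = [1200, 850, 430]
total = secure_sum(planned_feed_in)
```

Each individual share is a uniformly random number, so a single agent learns nothing about another agent's value from the shares it receives; only the aggregate becomes known. The extra messages per agent hint at the performance cost mentioned above.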

Verification of self-x systems

The verification of properties of a self-x system is desirable and particularly necessary for use in critical infrastructure. A distinction can be made here between functional and formal verification. Functional verification attempts to prove that a system meets a certain specification. This proof can be provided by various means, e.g., tests, simulation, or formal verification. Formal verification is the proof of the correctness of the system by mathematical methods.

Self-x systems often have properties that pose great challenges for the usual techniques of formal verification. These include a high degree of decentralisation, asynchronous interactions, dynamic adaptations of the system structure at runtime, the interconnection of diverse systems, and, in most cases, the use of heuristic optimisation methods. The core problem is usually that the state space to be investigated quickly becomes too large for results to be computed (a so-called "state space explosion").
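The growth behind the state space explosion can be made concrete with a short calculation. The sketch below assumes, purely for illustration, that every agent has the same number of local states and that local states can be combined freely, which gives an upper bound on the number of global states; messages in transit in an asynchronous system would enlarge the space further. The function name and the numbers are hypothetical.

```python
def joint_state_count(states_per_agent: int, n_agents: int) -> int:
    """Upper bound on global states if every agent has the same number of local states."""
    return states_per_agent ** n_agents


# Hypothetical swarm sizes with four local states per agent.
for n_agents in (10, 50, 100):
    print(n_agents, joint_state_count(4, n_agents))
```

Because the count grows exponentially with the number of agents, exhaustive exploration becomes infeasible even for moderately sized swarms.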

To deal with one or more of these properties, various methods have been developed in recent years that, for example, map the allowed state space via a function or formally aggregate the agents of a swarm system into an overall system. We are working on developing similar methods for the constraints necessary in energy systems, making them effectively usable and thus creating trust in a future decentralised energy supply.

Contact


Persons

Dr. rer. nat. Stefanie Holly

E-Mail: stefanie.holly(at)offis.de, Phone: +49 441 9722-732, Room: Flx-E

Dr. rer. nat. Martin Tröschel

E-Mail: martin.troeschel(at)offis.de, Phone: +49 441 9722-150, Room: Flx-E

Projects

2025

Edge-RZ

Feasibility study: grid-supportive edge data centres as sustainable and resilient infrastructure

Duration: 2025 - 2026

2024

2023

NFDI4Energy

National Research Data Infrastructure for the Interdisciplinary Energy System Research

Duration: 2023 - 2028

2022

DEER

Decentralised Redispatch (DEER): interfaces for flexibility provision

Duration: 2022 - 2025

REMARK

Resilience in the digitalised power system: toolbox for evaluating ancillary service markets

Duration: 2022 - 2024

2021

WWNW

WärmewendeNordwest: digitalisation for implementing heat-transition and value-added applications for buildings, campuses, districts and municipalities in the north-west

Duration: 2021 - 2026

2020

2019

2016

Publications

2026

Agent-Based Flexibility Aggregation for a Distributed Redispatch

Radtke, Malin and Stark, Sanja and Holly, Stefanie; Energy Informatics; 2026

Resilience of Digitalized Power Systems-Challenges and Solutions

Brand, Michael and Stark, Sanja and Holly, Stefanie and Kamsamrong, Jirapa and Mayer, Christoph and Lehnhoff, Sebastian; Towards Energy System Resilience; 2026

2025

A Comprehensive Toolbox for Evaluating Reactive Power Market Designs

Holly, Stefanie and Pechan, Anna and Schulte, Eike and Palovic, Martin and Buchmann, Marius; 2025 IEEE Kiel PowerTech; June / 2025

A multi-agent approach with verifiable and data-sovereign information flows for decentralizing redispatch in distributed energy systems

Heess, Paula and Holly, Stefanie and Körner, Marc-Fabian and Nieße, Astrid and Radtke, Malin and Schick, Leo and Stark, Sanja and Strüker, Jens and Zwede, Till; Energy Informatics; February / 2025

Amplify: Multi-purpose flexibility model to pool battery energy storage systems

Tiemann, Paul Hendrik and Nebel-Wenner, Marvin and Holly, Stefanie and Frost, Emilie and Nieße, Astrid; Applied Energy; 2025

Communication Modeling Approaches in Energy System Applications: A Systematic Overview

Radtke, Malin and Frost, Emilie and Nieße, Astrid and Lehnhoff, Sebastian; IEEE Access; 2025

Effect of the Quantity and Penetration of Energy Resources on the Incentivization of Grid-Beneficial Behavior in Prosumer Households

Sager, Jens and Wegkamp, Carsten and Niesse, Astrid and Engel, Bernd; ETG Kongress 2025; Voller Energie-heute und morgen.; 2025

Online Meta-Modeling of Communication Network Simulations for Agent-Based Energy Systems

Radtke, Malin and Lehnhoff, Sebastian; 2025 IEEE International Conference on Communications, Control, and Computing Technologies for Smart Grids (SmartGridComm); 2025

Simulative Analysis of Multi-Agent Systems in Energy Systems: Impact of Communication Networks

Radtke, Malin and Frost, Emilie; Proceedings of the 17th International Conference on Agents and Artificial Intelligence (ICAART 2025) - Volume 1; January / 2025

Ten Recommendations for Engineering Research Software in Energy Research

Ferenz, Stephan and Frost, Emilie and Schrage, Rico and Wolgast, Thomas and Beyers, Inga and Karras, Oliver and Werth, Oliver and Nieße, Astrid; Proceedings of the 16th ACM International Conference on Future and Sustainable Energy Systems; June / 2025